
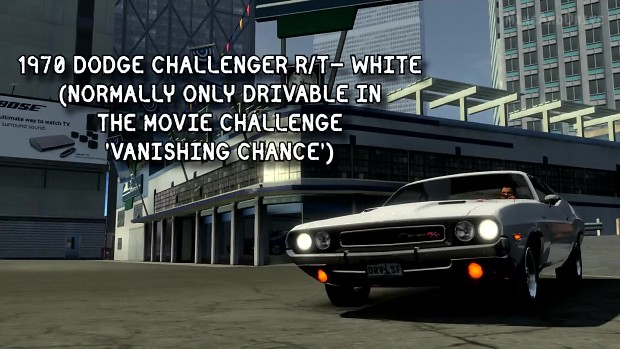

Driver San Francisco More Cars Mod. Rated 4.0/5 (7626 reviews). The ASYM Desanne is an unlicensed vehicle manufactured by the fictional car brand ASYM. It is only spotted during missions and, as it is unlicensed, is able to explode.

The following returns everything just fine:

    import pandas as pd
    import pyodbc

    conn = pyodbc.connect('DSN=HiveConnection;UID=username;PWD=password', autocommit=True)
    df = pd.read_sql("SELECT * FROM myTable AS m WHERE m.file = 'myfile'", con=conn)

Resolved existing issues to meet the user requirements. Added functionality to the ongoing project to export data, based on user selection, to the CSV and JSON file formats. Technologies: C#, Python, Microsoft SQL Server, Oracle, PL/SQL, JSON, Dask, GitHub, Docker.

Advanced usage of callbacks.

Calling to_sql() on each pandas DataFrame section is totally fine, if you include the if_exists="append" parameter.

Tests added / passed. Passes black dask / flake8 dask. Latest overview of the state of the PR: #6038 (review). I basically followed the implementation suggested here: #943 (comment). I believe calling…

For the investigation of the benchmark, i.e., the SKA SDP Mid1 pipeline, the data processing astronomical algorithm of SKA is suggested to be…

Spark SQL, the third module, enables Spark's efficient parallelism to be applied to the massive data query domain. The last one, called MLlib, provides some APIs for distributed machine learning.

Well-organized and easy-to-understand web building tutorials with lots of examples of how to use HTML, CSS, JavaScript, SQL, PHP, Python, Bootstrap, Java and XML.

The usage-stylesheet.py example (hosted on the dash-cytoscape GitHub repo) lets you click to change the color of a node to purple, its targeted nodes to red, and its incoming nodes to bl… In fact, you can even use them to update other Cytoscape arguments.

Dask is a flexible parallel computing library for analytics.

df.isnull().values.any() (2) Count the NaN under a single DataFrame column:

Modeling, dask, Open Source. Posted by RAPIDS. Summary: Some time ago, Matthew Rocklin wrote a post on Numba Stencils with Dask, demonstrating how to use them for both CPUs and GPUs.

Read more in the User Guide. Parameters: n_clusters: int, default=8. The number of clusters to form as well as the number of centroids to generate. init: callable or array-like of shape (n_clusters, n_features), default='k-means++'. Method for initialization.

San Francisco More Cars Mod Driver And Monitor

Dask distributed has support for TLS/SSL communication, providing mutual authentication and encryption of communications between cluster endpoints (Clients, Schedulers, and Workers).
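The remark about calling to_sql() on each DataFrame section with if_exists="append" can be illustrated with a small sketch. It assumes an in-memory SQLite database as a stand-in for the real target, and the table name `measurements` and the sample sections are hypothetical.

```python
import sqlite3

import pandas as pd

# Hypothetical data: three DataFrame sections destined for the same table.
sections = [
    pd.DataFrame({"id": [1, 2], "value": [0.5, 1.5]}),
    pd.DataFrame({"id": [3, 4], "value": [2.5, 3.5]}),
    pd.DataFrame({"id": [5], "value": [4.5]}),
]

# Assumption: an in-memory SQLite database stands in for the real target.
conn = sqlite3.connect(":memory:")

for section in sections:
    # if_exists="append" creates the table on the first call and adds rows
    # on later calls; the default ("fail") would raise on the second section.
    section.to_sql("measurements", conn, if_exists="append", index=False)

row_count = conn.execute("SELECT COUNT(*) FROM measurements").fetchone()[0]
print(row_count)
```

Writing section by section keeps only one section in memory at a time, which is the main reason to prefer it over concatenating everything first.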
Out of the box, you have a unified way to read log files from all running containers. You don't need to remember all the specific paths where your app and its dependencies store log files and write custom hooks to handle this. You can integrate an external logging driver and monitor your app log files in one place.

This weekend I found myself in a particularly drawn-out game of Chutes and Ladders with my four-year-old. If you've not had the pleasure of playing it, Chutes and Ladders (also sometimes known as Snakes and Ladders) is a classic kids board game wherein players roll a six-sided die to advance forward through 100 squares, using "ladders" to jump ahead, and avoiding "chutes" that send you backward.

In this deck from FOSDEM'19, Christoph Angerer from NVIDIA presents: Rapids - Data Science on GPUs.

Become a Data Scientist in 2020 with these 10 resources. Use Iterators, Generators, and Generator Expressions, by Rahul Agarwal, 28 November 2020.

Apache Airflow is an open source platform used to author, schedule, and monitor workflows. Airflow overcomes some of the limitations of the cron utility by providing an extensible framework that includes operators, a programmable interface to author jobs, a scalable distributed architecture, and rich tracking and monitoring capabilities.

This tutorial covers the well-known SQLAlchemy Core API that has been in use for many years.

dd.read_sql_table does implement a number of features of its pandas equivalent, including the ability to use SQLAlchemy objects in at least some situations. An indexing column is required, and one may pass explicit division markers, or else a separate query will be run to find them.

Python has become the lingua franca for constructing simple case studies that communicate domain-specific intuition therein, codifying a procedure to (1) build a model that apparently works on a small subset of data, (2) use conventional…
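The Chutes and Ladders rules described above (roll a six-sided die, advance through 100 squares, climb ladders, slide down chutes) can be simulated in a few lines. This is only a sketch: the ladder and chute positions below are an assumed board layout, and the rule that a roll overshooting square 100 is forfeited is also an assumption, since the text states neither.

```python
import random

# Assumed board layout (not given in the text): start square -> end square.
LADDERS = {1: 38, 4: 14, 9: 31, 21: 42, 28: 84, 36: 44, 51: 67, 71: 91, 80: 100}
CHUTES = {16: 6, 47: 26, 49: 11, 56: 53, 62: 19, 64: 60, 87: 24, 93: 73, 95: 75, 98: 78}
JUMPS = {**LADDERS, **CHUTES}


def play_game(rng):
    """Play one single-player game and return the number of turns taken."""
    position = 0
    turns = 0
    while position < 100:
        turns += 1
        roll = rng.randint(1, 6)  # six-sided die
        if position + roll > 100:
            continue  # assumed rule: an overshooting roll is forfeited
        position += roll
        position = JUMPS.get(position, position)  # follow a ladder or chute
    return turns


rng = random.Random(0)  # fixed seed so repeated runs give the same games
turn_counts = [play_game(rng) for _ in range(1000)]
average_turns = sum(turn_counts) / len(turn_counts)
print(min(turn_counts), round(average_turns, 1))
```

Because the game involves no decisions, repeated simulation like this is enough to estimate how long a "particularly drawn-out" game is relative to a typical one.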
As the community of data science engineers approaches problems of increasing volume, there is a prescient concern over the timeliness of their solutions.

The RAPIDS project is the first step in giving data scientists the ability to use familiar APIs and abstractions while taking advantage of the sa…

Read SQL query or database table into a DataFrame. This function is a convenience wrapper around read_sql_table and read_sql_query (for backward compatibility). A SQL query will be routed to read_sql_query, while a database table name will be routed to read_sql_table.

Dask parallelizes libraries like NumPy, Pandas, and Scikit-Learn. It scales from a laptop to thousands of computers, and offers a familiar API and in-memory computation.

Stack Exchange network consists of 176 Q&A communities including Stack Overflow, the largest, most trusted online community for developers to learn, share their knowledge, and build their careers.

When reading the SQL files in Python, I had to strip newline characters and empty items in the list of SQL statements. You can see the code that handles this in the with open statement.

Using seaborn to visualize a pandas DataFrame.

SQL Tutorial for Beginners. This SQL Tutorial for Beginners is a complete package for how to learn SQL online. In this SQL tutorial, you will learn SQL programming to get a clear idea of what Structured Query Language is and how you deploy SQL to work with a relational database system.

Note 1: While using Dask, every dask-dataframe chunk, as well as the final output (converted into a Pandas DataFrame), MUST be small enough to fit into memory.

It's clear that some complex analytical tasks are still best handled with other technologies like the good old relational database and SQL.

Microsoft exam 70-765 (aka "Provisioning SQL Databases") is one of two tests you must pass to earn a Microsoft Certified Solutions Associate (MCSA): 2016 Database Administration certification.
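The step of reading a SQL file and stripping newline characters and empty items from the list of statements might look like the sketch below. The original code is not shown in the text, so the helper name `load_statements`, the file name `setup.sql`, and the sample statements are all hypothetical, and SQLite is used only so the example runs on its own.

```python
import sqlite3
import tempfile
from pathlib import Path

# Hypothetical SQL file contents with blank lines and a trailing separator.
SQL_TEXT = """
CREATE TABLE myTable (id INTEGER, file TEXT);

INSERT INTO myTable VALUES (1, 'myfile');
INSERT INTO myTable VALUES (2, 'other');

"""


def load_statements(path):
    """Read a SQL file and return its non-empty statements, one per item."""
    with open(path, encoding="utf-8") as handle:
        raw = handle.read()
    # Split on the statement separator, then flatten newlines and trim.
    pieces = [piece.replace("\n", " ").strip() for piece in raw.split(";")]
    # Drop the empty items left behind by blank lines and the final ";".
    return [piece for piece in pieces if piece]


with tempfile.TemporaryDirectory() as tmp:
    sql_path = Path(tmp) / "setup.sql"
    sql_path.write_text(SQL_TEXT, encoding="utf-8")
    statements = load_statements(sql_path)

conn = sqlite3.connect(":memory:")
for statement in statements:
    conn.execute(statement)

matching = conn.execute("SELECT id FROM myTable WHERE file = 'myfile'").fetchall()
print(len(statements), matching)
```

Splitting on ";" is deliberately naive: it would break a statement whose string literal contains a semicolon, so a real loader may need a proper SQL parser.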
Iirc pandas.read_sql(queryString, connectionObject) will return a DataFrame of what your database returns. ~15M rows shouldn't be too large to fit in RAM (I would guess). If your result is too large for your RAM, check out Dask, which lets you use larger-than-memory dataframes much like pandas' dataframes.

This is the 4th chapter of the Dash Tutorial. The previous chapter covered basic callback usage.

The Best Data Visualization Tools. Business intelligence (BI) tools can help you parse numbers and data, but it's visualization that'll help you get others to understand your conclusions.

It's also a practical introduction to Microsoft's Azure cloud platform service.

Example. Just getting started? We use the timeseries random data from dask.datasets as an example.

    from dask_sql import Context
    from dask.datasets import timeseries

    # Create a context to hold the registered tables
    c = Context()

    # If you have a cluster of dask workers,
    # initialize it now

    # Load the data and register it in the context
    # This will give the table a name
    df = timeseries()
    c.create_table("timeseries", df)
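The pandas.read_sql(queryString, connectionObject) call mentioned above can be tried without a database server. In this sketch an in-memory SQLite database and three hypothetical rows stand in for the real source; the chunksize loop is one assumed way to keep memory bounded when a result is large, short of moving to Dask.

```python
import sqlite3

import pandas as pd

# Assumption: an in-memory SQLite table stands in for the real database.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE myTable (id INTEGER, file TEXT)")
conn.executemany(
    "INSERT INTO myTable VALUES (?, ?)",
    [(1, "myfile"), (2, "other"), (3, "myfile")],
)
conn.commit()

# The whole result comes back as a single DataFrame.
df = pd.read_sql("SELECT * FROM myTable AS m WHERE m.file = 'myfile'", con=conn)

# With chunksize, read_sql yields DataFrames of at most that many rows,
# so only one chunk needs to fit in memory at a time.
total_rows = 0
for chunk in pd.read_sql("SELECT * FROM myTable", con=conn, chunksize=2):
    total_rows += len(chunk)

print(len(df), total_rows)
```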


Author: Brian
